About my notebook

This is my lab notebook. It is how I organize and document my scientific research on a day-to-day basis. It is intended primarily for my personal use as a permanent record of my work.

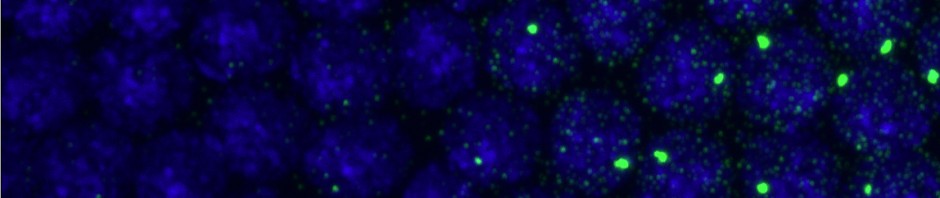

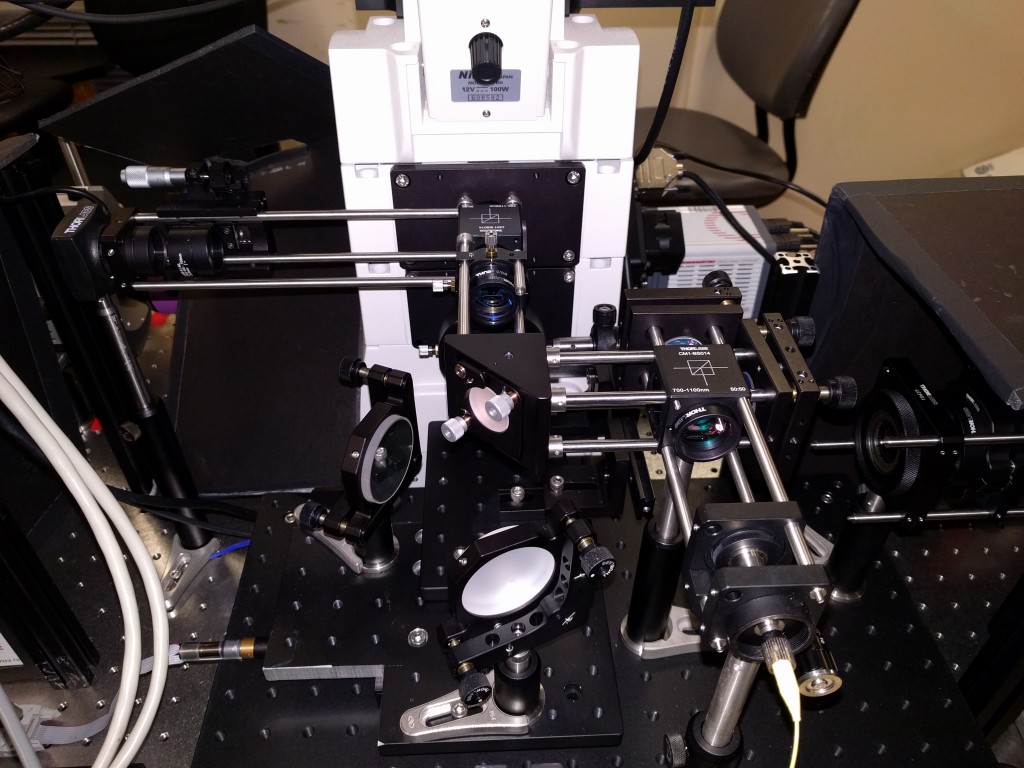

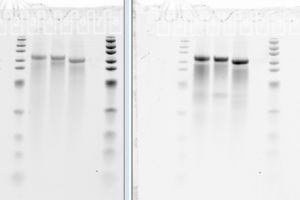

Unlike traditional notebooks, it is text-searchable. All notes are filed under appropriate categories, and I can easily rearrange my notebook to display only posts relating to a particular category. Research images, from gel photos to simulation results to draft figures for manuscripts, are embedded in the notes and automatically become part of a date-tagged, browsable image collection. Hyperlinks let me connect protocols and references.

My notebook also automatically tracks my code development through an RSS feed from my code repository on GitHub. It additionally keeps track of what I’m reading through an RSS feed from Mendeley (when the API works).
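As a rough illustration of the feed-based tracking described above, here is a minimal sketch of how a commit feed could be turned into dated notebook entries. It assumes the feed is in Atom format; the sample feed, its URLs, and the function name `parse_commit_feed` are hypothetical and stand in for whatever the notebook software actually does.

```python
import xml.etree.ElementTree as ET

# Atom elements live in this XML namespace.
ATOM_NS = {"atom": "http://www.w3.org/2005/Atom"}

# Hypothetical sample feed; a real one would be fetched from the repository host.
SAMPLE_FEED = """<feed xmlns="http://www.w3.org/2005/Atom">
  <title>Recent commits (hypothetical sample)</title>
  <entry>
    <title>Fix off-by-one in simulation loop</title>
    <updated>2011-03-02T18:04:00Z</updated>
    <link href="https://example.org/commit/abc123"/>
  </entry>
  <entry>
    <title>Add plotting script for draft figure</title>
    <updated>2011-03-01T09:30:00Z</updated>
    <link href="https://example.org/commit/def456"/>
  </entry>
</feed>"""


def parse_commit_feed(xml_text):
    """Return one dict per feed entry with its date, title, and link."""
    root = ET.fromstring(xml_text)
    entries = []
    for entry in root.findall("atom:entry", ATOM_NS):
        title = entry.findtext("atom:title", default="", namespaces=ATOM_NS)
        updated = entry.findtext("atom:updated", default="", namespaces=ATOM_NS)
        link = entry.find("atom:link", ATOM_NS)
        entries.append({
            "date": updated[:10],  # keep only the YYYY-MM-DD part of the timestamp
            "title": title.strip(),
            "url": link.get("href") if link is not None else None,
        })
    return entries


if __name__ == "__main__":
    for item in parse_commit_feed(SAMPLE_FEED):
        print(item["date"], item["title"], item["url"])
```

Each resulting entry carries a date, so commits could be filed alongside the notes written on the same day.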

Beyond convenience, I hope this notebook contributes in a small way to making Science more Open. Much of what we do as academic researchers is never published, and these findings (though generally funded by taxpayer dollars) are forever lost to the world. Much of what is published is available only in expensive, specialized journals to which most of the world has no access. It is my hope, and that of the Open Science Movement, that the technologies of Web 2.0 will enable us to move to a world where this is no longer the case. In an open or partially open web-hosted notebook, unpublished ideas and discoveries can still be released to the world, where they are text-searchable and easily discoverable, so that other researchers can benefit from them.

Unpublished information that I am not yet ready to share with the world at large is protected by passwords. These can be shared with individual collaborators of mine, and removed once the relevant work is published.

I have received much inspiration, encouragement, and technical support from several individuals. Most important among them is my brother, Carl Boettiger, who is a leading figure in the Open Science Movement at Davis and keeps an Open Notebook here.